Image to editor

Finish images inside the video editor

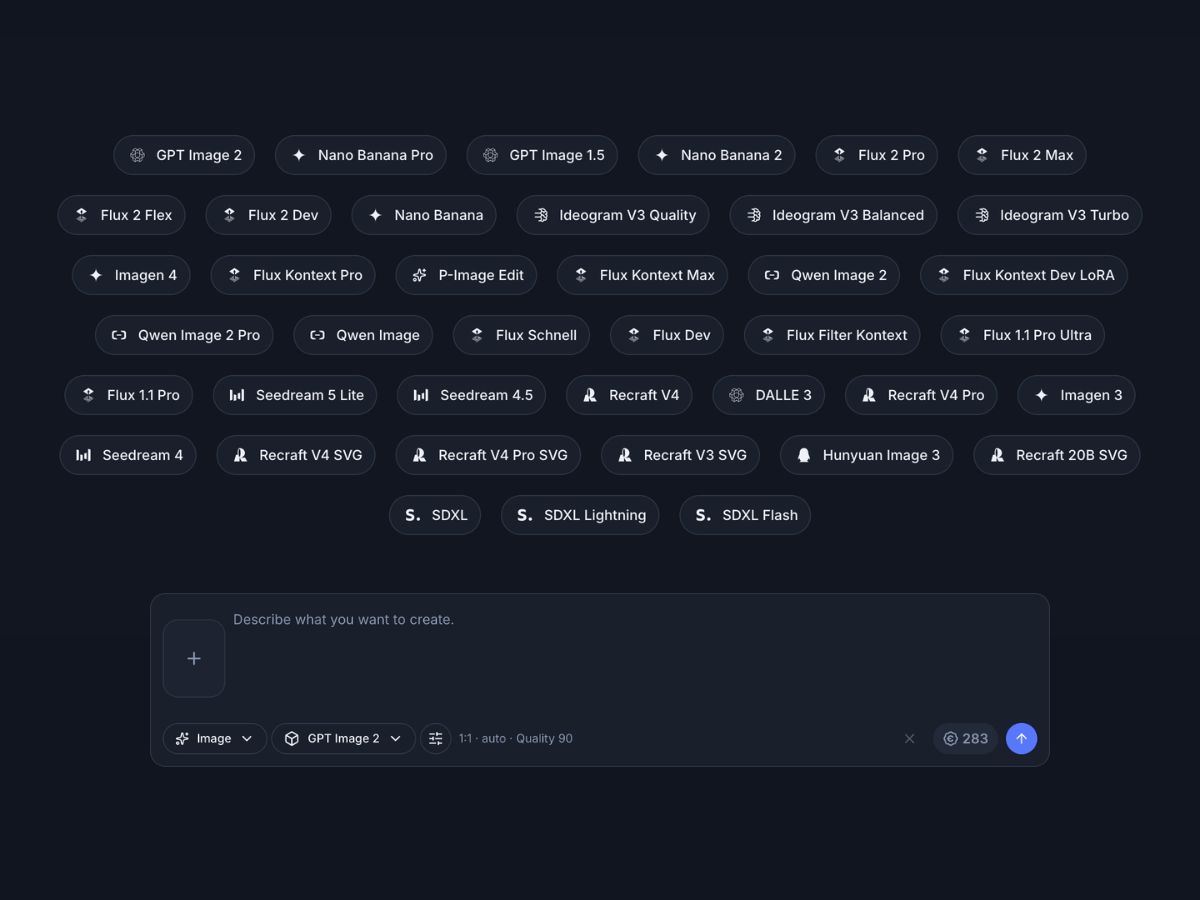

Move approved images into the Indream editor when a visual needs captions, text, brand details, timing adjustments, or export versions for different channels.

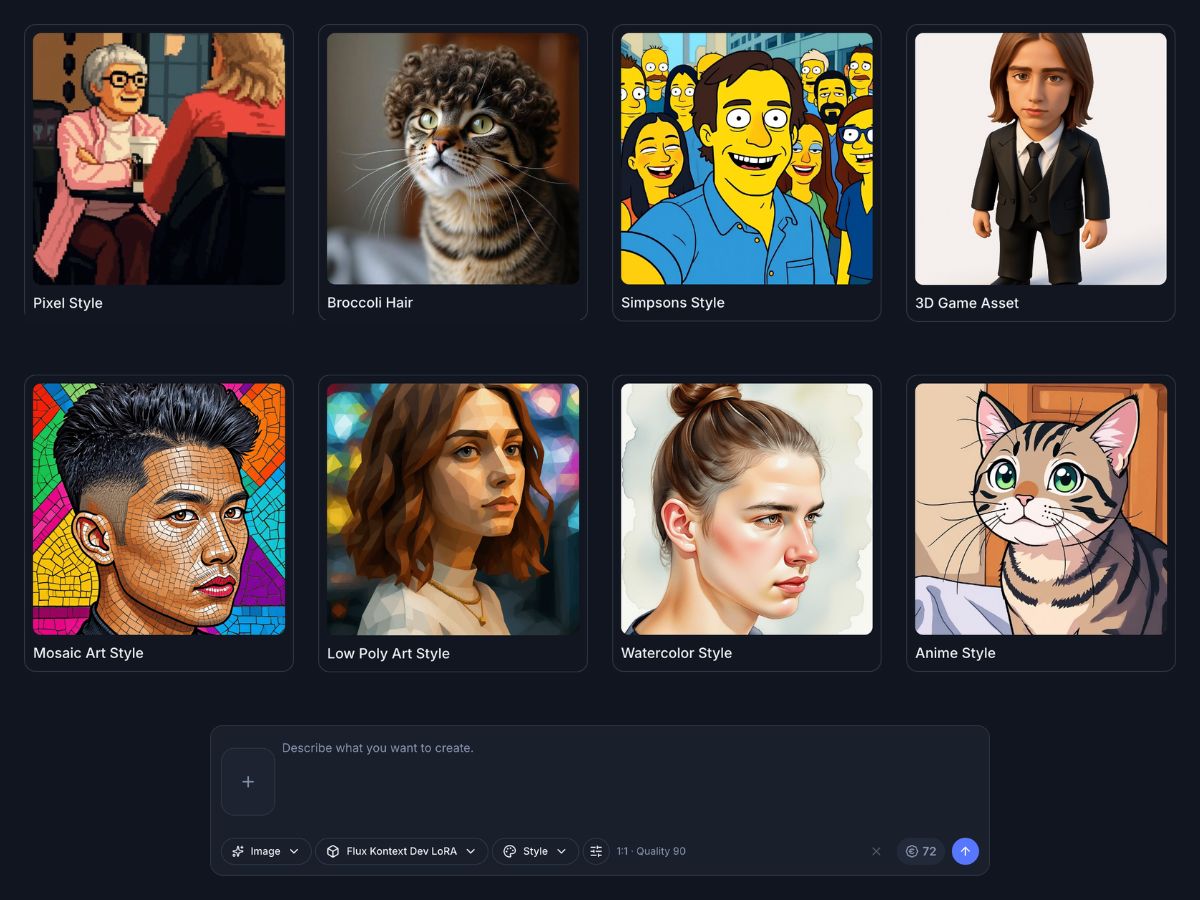

Add captions, text overlays, product notes, or brand details

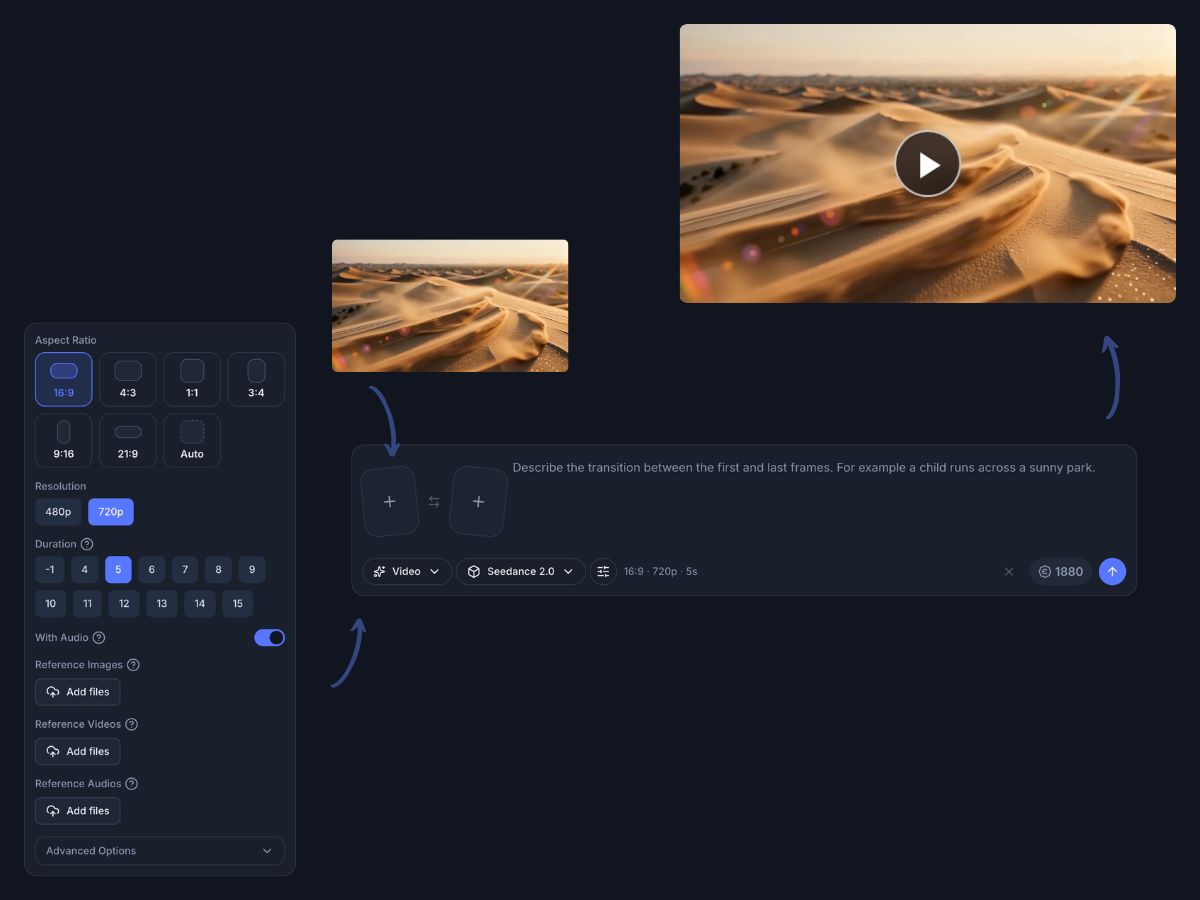

Place generated images beside clips, charts, audio, or supporting visuals

Export polished versions for posts, ads, product pages, or presentations