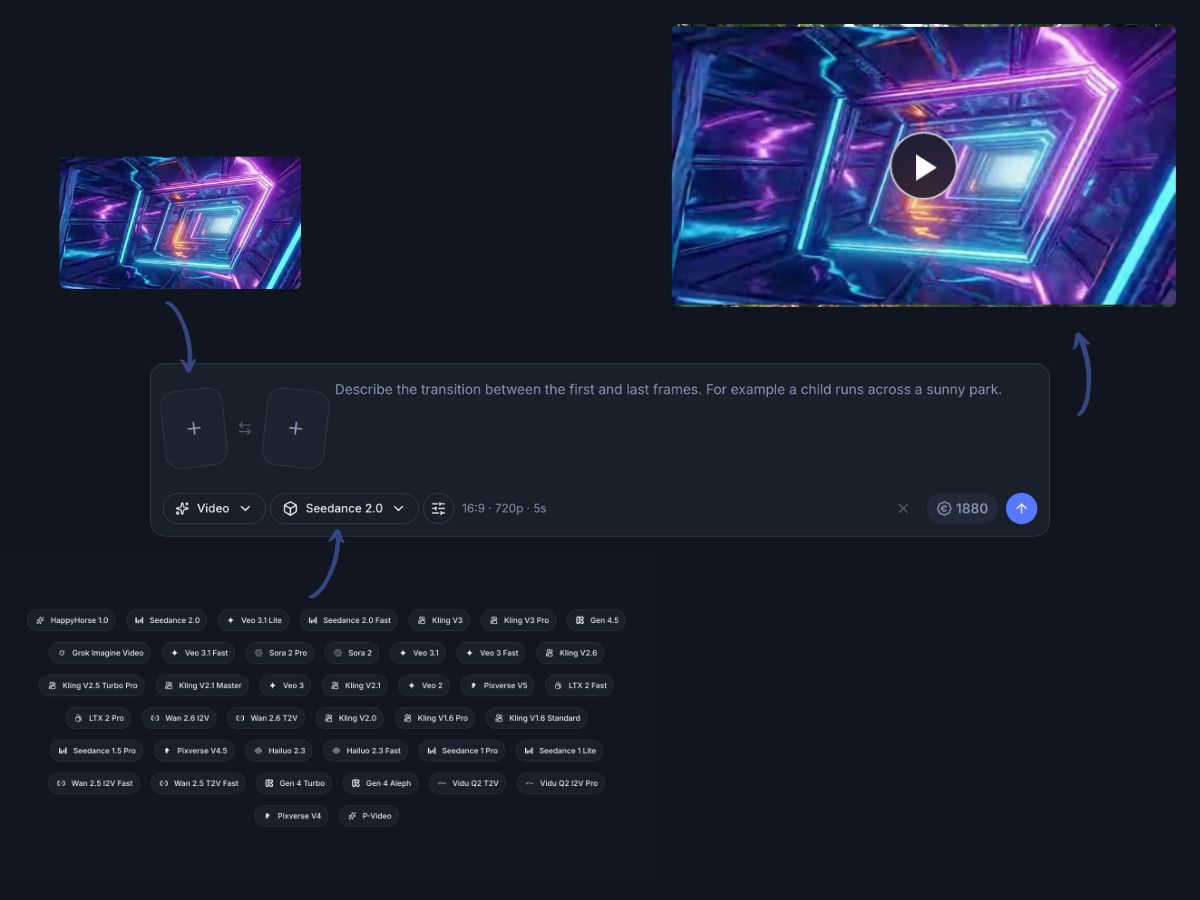

Model catalog

Switch between Veo, Kling, Sora, HappyHorse, Seedance, and more in one place

Different video tasks call for different model strengths. The AI Video Generator keeps Veo, Kling, Sora, HappyHorse, Seedance, Runway, and more accessible from a single surface so every generation starts with the right fit.

Choose text-to-video, image-guided, or video-to-video input paths by model

Compare results from different providers without rebuilding the project

Review credit estimates before each run so budget stays predictable