Native 1080p resolution

HappyHorse AI generates 3- to 15-second clips at 1080p resolution, so the visual detail holds up whether the output is used directly or edited further.

Turn prompts, images, reference visuals, or existing clips into short videos. HappyHorse AI helps you shape scenes, motion, style, and story from a simple idea.

HappyHorse AI focuses on short videos with clear motion, sharp detail, synced audio, and enough control to start from a prompt, image, reference, or clip.

Audio is generated alongside the video clip in a single pass, including synchronized dialogue, ambient sound, and Foley, without any separate post-processing step.

Dialogue and lip-sync line up across seven languages, making short character scenes, voice-led clips, and regional ideas easier to test.

Generate clips in 16:9, 9:16, 4:3, 3:4, or 1:1 so the first result can match social, ads, product pages, or presentation formats.
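
As a rough illustration of what these aspect ratios could mean in pixels, the sketch below assumes that "1080p" fixes the shorter side of the frame at 1080 pixels. The exact output dimensions for each ratio are not stated here, so the `frame_size` helper and the numbers it produces are illustrative only.

```python
from fractions import Fraction

# Aspect ratios listed above, as (width, height) parts.
ASPECT_RATIOS = {
    "16:9": (16, 9),
    "9:16": (9, 16),
    "4:3": (4, 3),
    "3:4": (3, 4),
    "1:1": (1, 1),
}

# Assumption: "1080p" means the shorter side of the frame is 1080 px.
SHORT_SIDE = 1080


def frame_size(ratio: str, short_side: int = SHORT_SIDE) -> tuple[int, int]:
    """Return (width, height) in pixels with the shorter side fixed."""
    w_part, h_part = ASPECT_RATIOS[ratio]
    # Scale both parts so the smaller one equals short_side.
    scale = Fraction(short_side, min(w_part, h_part))
    return int(w_part * scale), int(h_part * scale)


if __name__ == "__main__":
    for name in ASPECT_RATIOS:
        width, height = frame_size(name)
        print(f"{name}: {width} x {height}")
```

Under that assumption, a 9:16 clip for vertical social feeds and a 16:9 clip for presentations share the same 1080-pixel short side, so only the orientation and long side differ.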

HappyHorse AI is recognized at the top of the Artificial Analysis Video Arena for both text-to-video and image-to-video generation.

Create short clips quickly enough to test scene ideas, product moments, and story beats without turning every attempt into a long production round.

Choose the HappyHorse AI path that matches what you have in hand: a sentence, an image, reference visuals, or an existing clip.

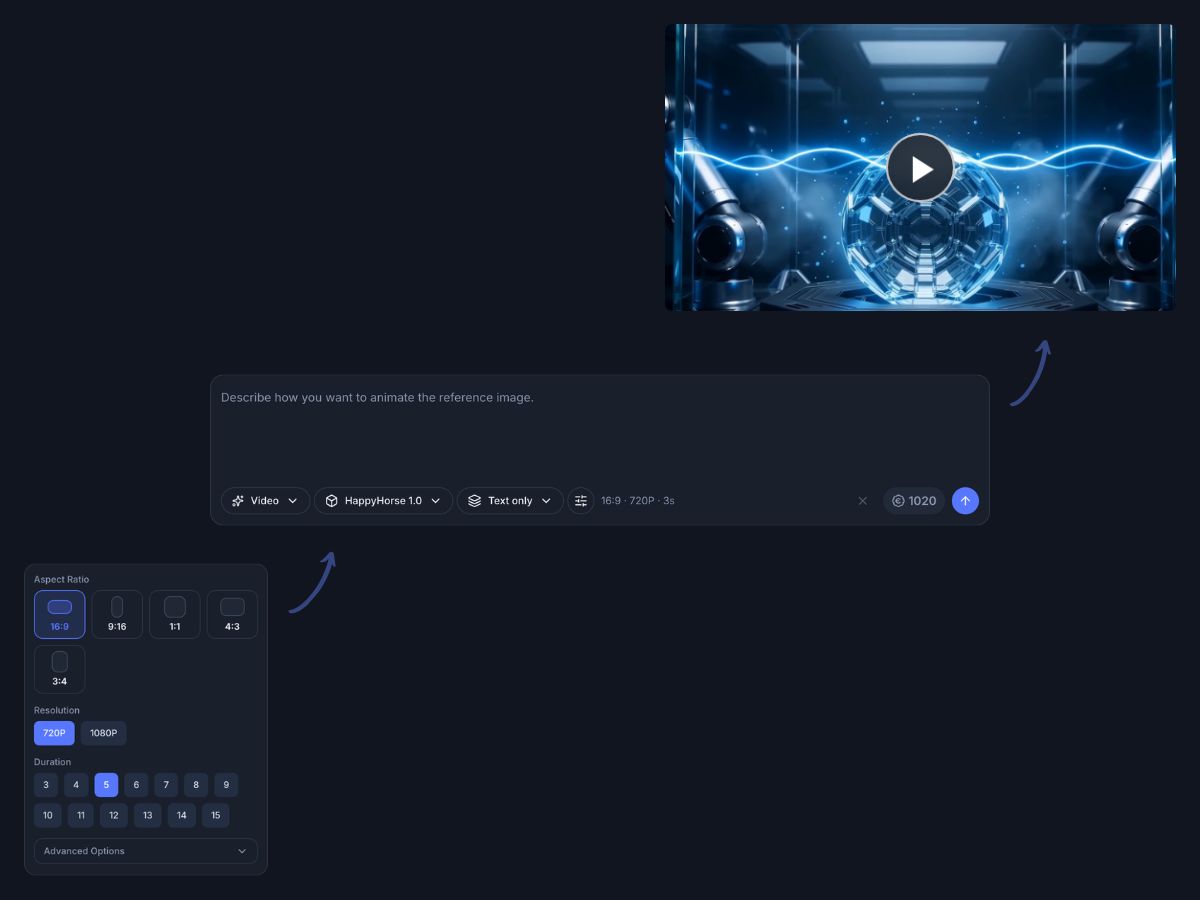

Prompt to video

Start HappyHorse AI with a written prompt when you want to explore a scene, product moment, story beat, or visual concept from scratch.

Describe the subject, action, setting, and mood

Shape camera movement and scene direction in plain language

Create short videos from a simple written idea

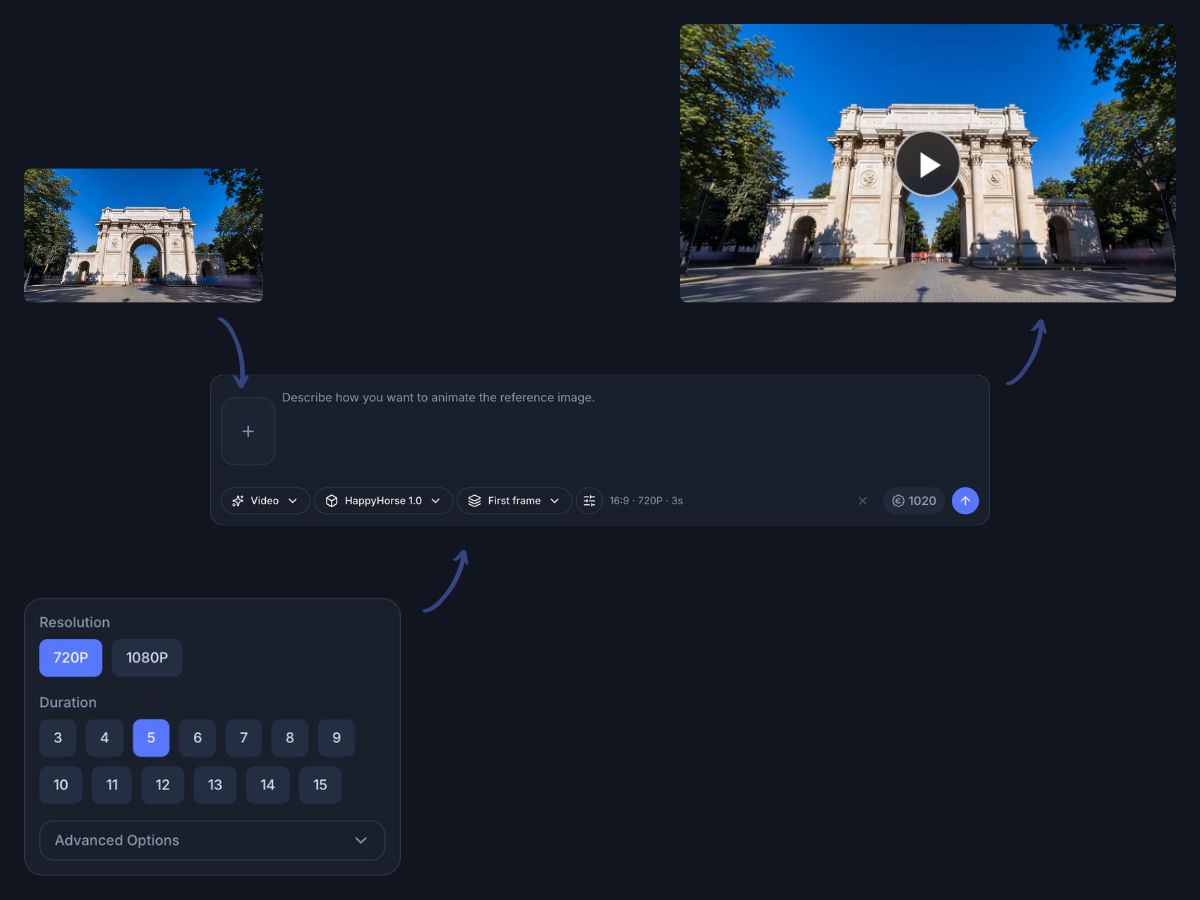

Image to video

Use HappyHorse AI with an image as the first frame when you want the video to start from a specific product, person, place, or design.

Animate product photos, concept art, posters, or character images

Keep the opening visual grounded in your chosen image

Add prompt details to guide the action and mood

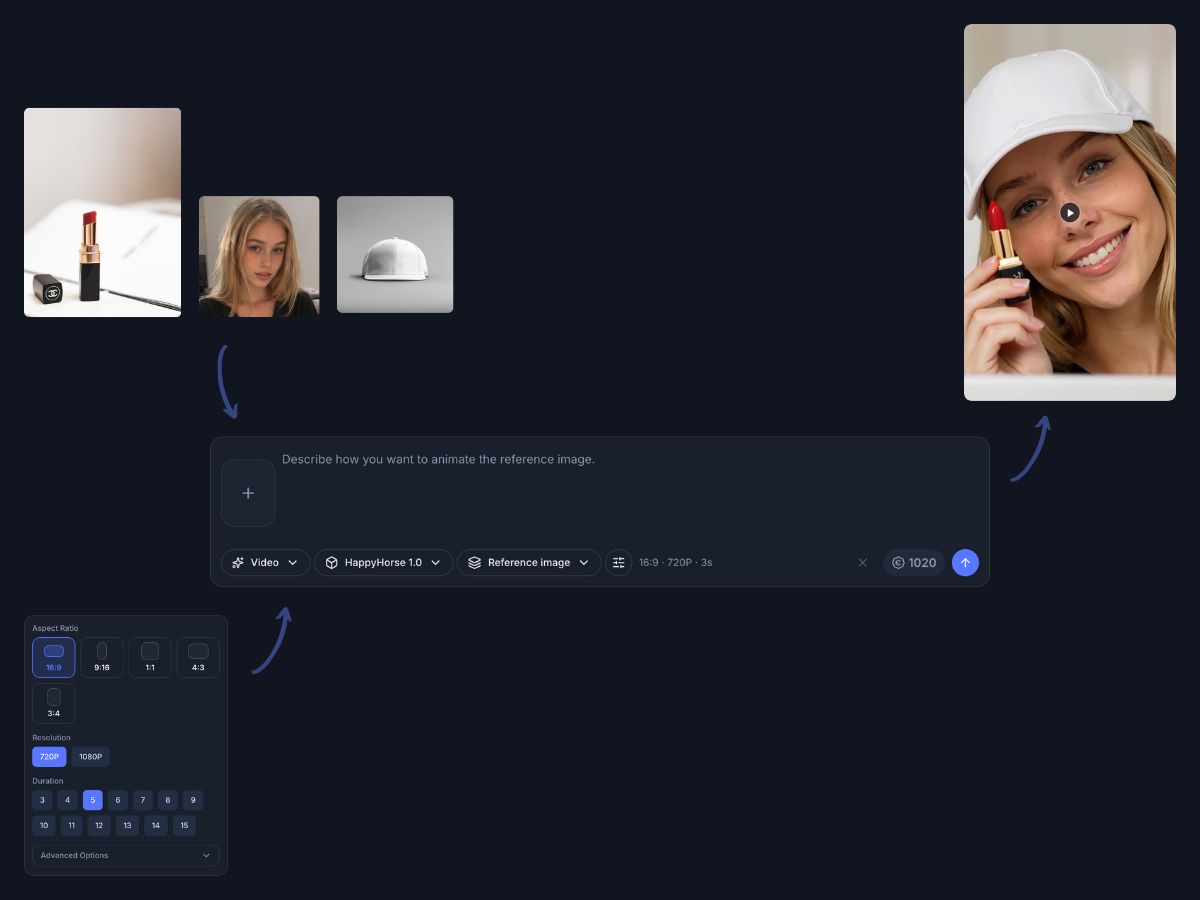

Reference to video

Add reference images in HappyHorse AI when you want the result to follow a visual direction, product detail, outfit, scene, or mood more closely.

Guide the subject, setting, color direction, or visual style

Use multiple references for richer creative direction

Keep the prompt simple while the images carry visual detail

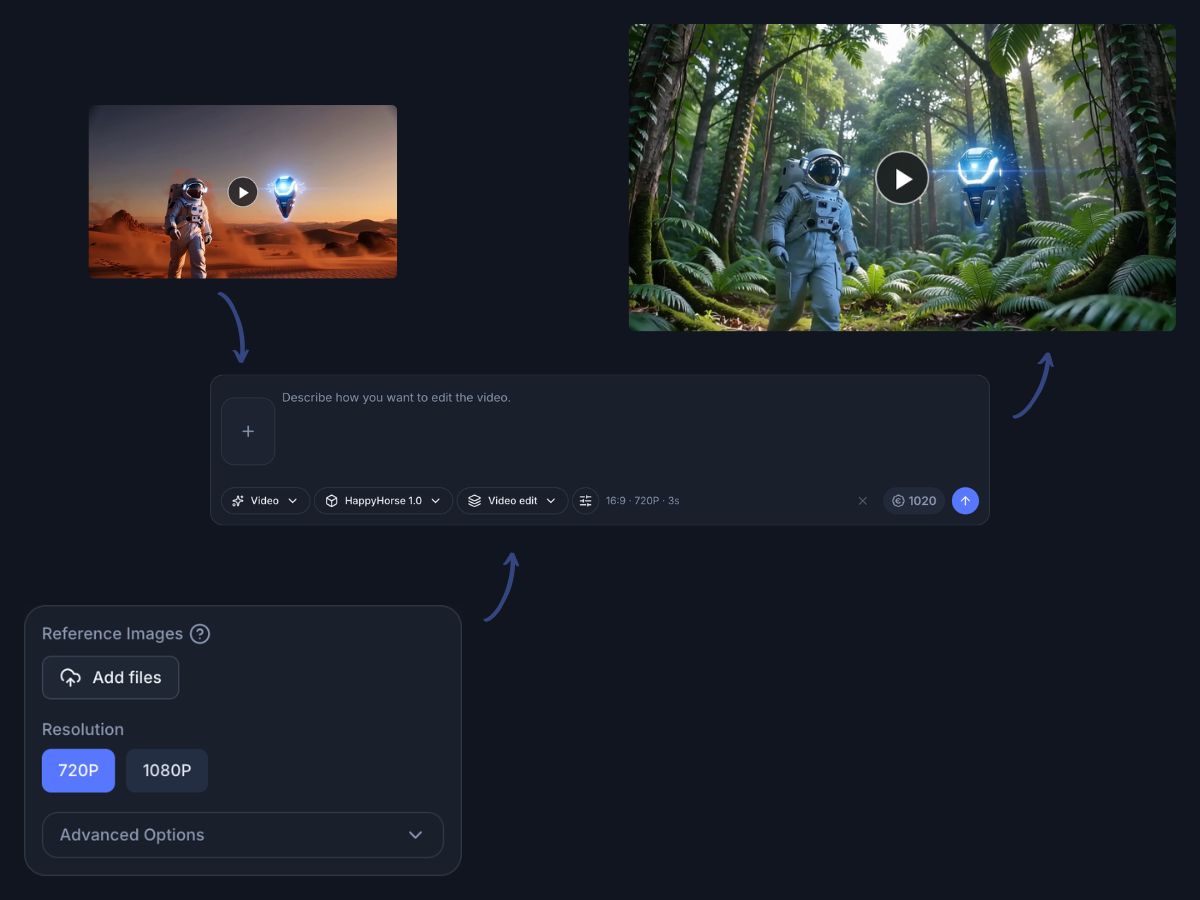

Video editing

Start from an existing video when the structure is useful, then describe the new direction you want HappyHorse AI to create.

Upload a clip and explain the change in everyday language

Add reference images for extra visual guidance

Use the original clip as a starting point for a new visual direction

HappyHorse AI is useful when you want a short video result with a clear subject, movement, and visual direction.

HappyHorse AI is designed for clear short videos with native 1080p output.

Clips are generated at full 1080p without upscaling, keeping visual detail sharp in the final output.

Synchronized dialogue and lip-sync work across seven languages in one generation pass.

Artificial Analysis ranks HappyHorse AI at the top of its AI Video Arena results.

After HappyHorse AI creates the clip, use Indream to add publishing details: captions, text, brand cues, scene order, and channel-ready exports.

Turn the generated clip into a finished version for social posts, ads, product pages, or presentations. Add context, keep the pacing clear, and export the version each channel needs.

Add captions or text overlays so the message is clear without sound

Place brand details, product callouts, or supporting visuals

Arrange multiple HappyHorse AI clips into a short sequence

Export versions for social, ads, product pages, or presentations

Use HappyHorse AI when you need a short, expressive video for a campaign, post, product idea, or story moment.

Create short clips for Reels, Shorts, TikTok, and everyday social posts with movement and style.

Animate product shots, launch ideas, feature moments, and visual concepts for ecommerce or brand pages.

Explore quick campaign ideas, mood directions, product hooks, and visual storyboards before production.

Build cinematic moments, character scenes, environment ideas, and visual experiments from simple prompts.

Open HappyHorse AI, choose a starting point, describe the scene, and generate the short video.

Visit HappyHorse AI, then choose a prompt, image, reference visuals, or an existing video clip as your starting point.

Tell HappyHorse AI what should happen, how it should feel, and what visual direction to follow.

Create the video, then add small edits in Indream when the clip needs final polish.

Answers to common questions about creating videos with HappyHorse AI in Indream.

Start with a prompt, image, reference visuals, or clip, then generate a short HappyHorse AI video.

Create videos from prompts, images, and other visual inputs with Indream video generation tools.

Add captions, text, brand details, scene order, and export versions after your video is generated.