Audio to content

Turn a useful track into part of the final piece

Tracks from the AI Music Generator often support a short video, explainer, ad, or product story. Indream keeps the audio alongside the content it belongs to.

Choose among music models by task and control needs

Use lyrics, duration, instrumental, and output controls where supported

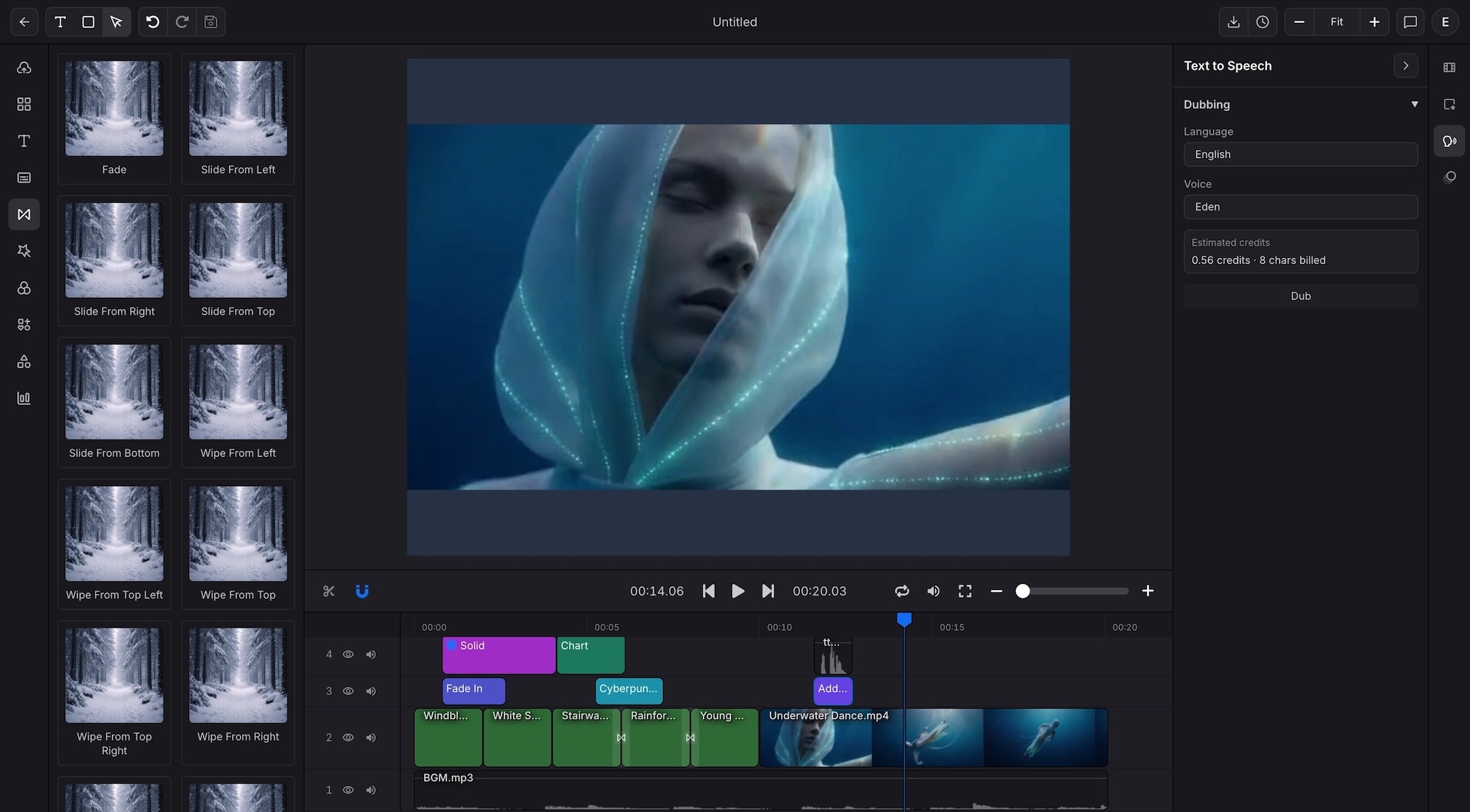

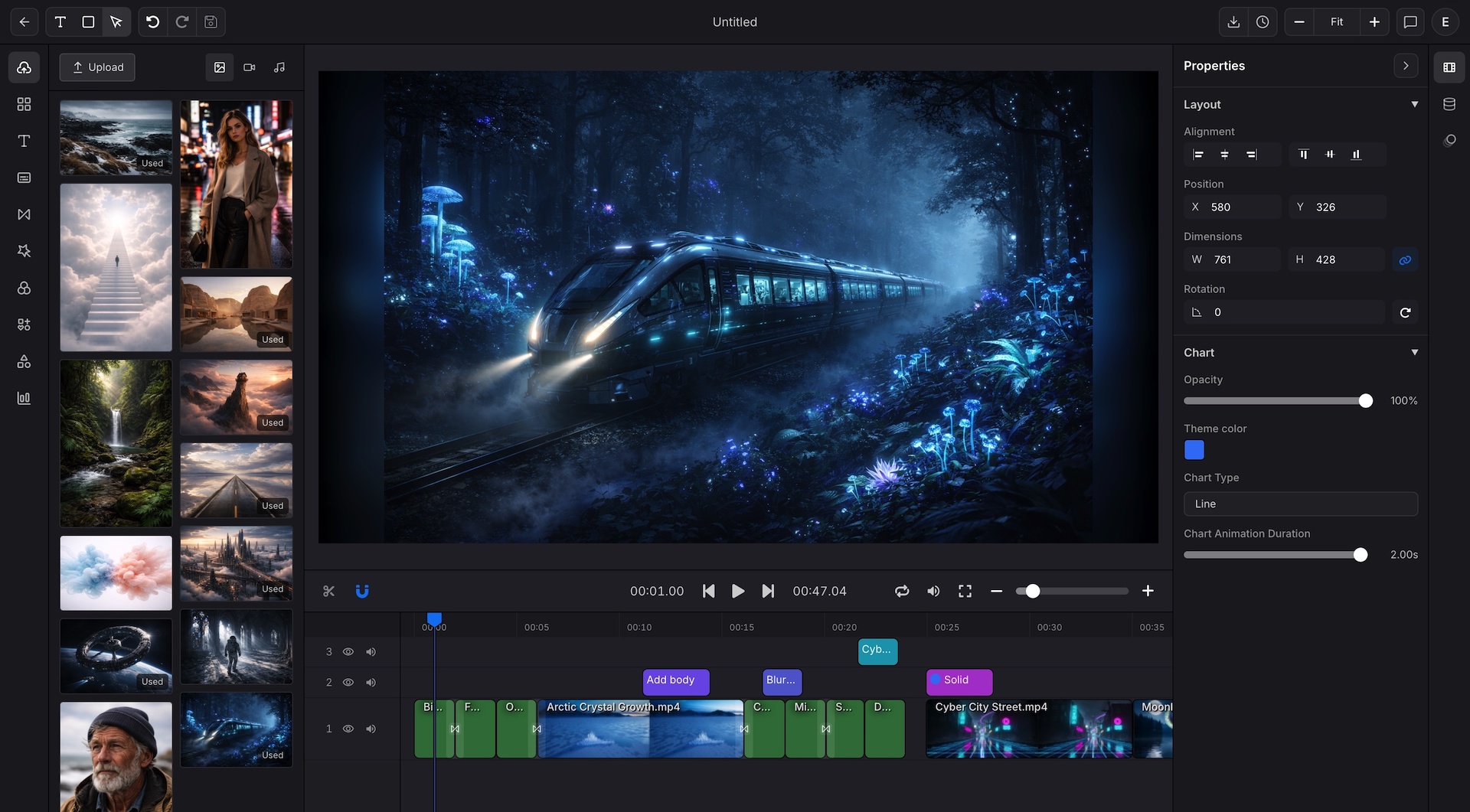

Bring useful results into a video project when timing matters